Contextual Document Assistant

RAG pipeline over PDFs and text: chunking, Universal Sentence Encoder embeddings, Elasticsearch, and LLaMA 3.3 for query rewriting and answers.

- Year

- 2025

- Team

- Ismael Meijide · Giuliano Bardecio · Joaquín Abeiro

Project Report: RAG System Implementation

1. Introduction

To implement the Retrieval-Augmented Generation (RAG) system, the following technical stack was defined:

- Environment: Python (Google Colab).

- Embeddings: Universal Sentence Encoder (USE).

- Vector Database: Elasticsearch.

- Chunking Strategy: Token-based segmentation.

- LLM: LLaMA-3.3-70B-Instruct-Turbo (for query expansion and response generation).

2. Development Process

2.1 Document Chunking

Large documents exceed model token limits and require splitting for precise semantic search.

- Token-based Chunking: Uses a maximum token count and an overlap parameter for context redundancy.

- Current Implementation: Uses sentence parsing combined with maximum word thresholds to maintain semantic consistency.

2.2 Embedding Generation

We evaluated multiple models, including all-MiniLM-L6-v2, Word2Vec, and Mistral-7B-v0.1.

- Selection: Universal Sentence Encoder (USE) was chosen for its superior balance of semantic accuracy and computational efficiency.

- Storage: Each chunk is stored as a

{chunk, embedding}tuple.

2.3 Vector Storage & Indexing

- Engine: Elasticsearch was selected for its reliability and efficient KNN (K-Nearest Neighbors) support.

- Optimization: We tuned the "m" parameter for node connections and tested multiple similarity metrics (Cosine, Dot Product, Euclidean).

- Indexing: Chunks are indexed with original text and Document IDs. We utilized

tqdmfor real-time progress tracking during the indexing phase.

2.4 Semantic Search & Query Expansion

To improve retrieval accuracy, the system follows these steps:

- Query Rewriting: The LLM refines the raw user input into a scientifically aligned query.

- Embedding: The refined query is transformed into a vector via USE.

- Search: Elasticsearch executes a KNN search to retrieve the top 5 most relevant chunks ($k=5$, $num_candidates=10$).

2.5 Final Response Generation

The system utilizes LLaMA 3.3 70B to:

- Process the retrieved context and the rewritten query.

- Construct a context-rich prompt.

- Generate an evidence-based, professional answer.

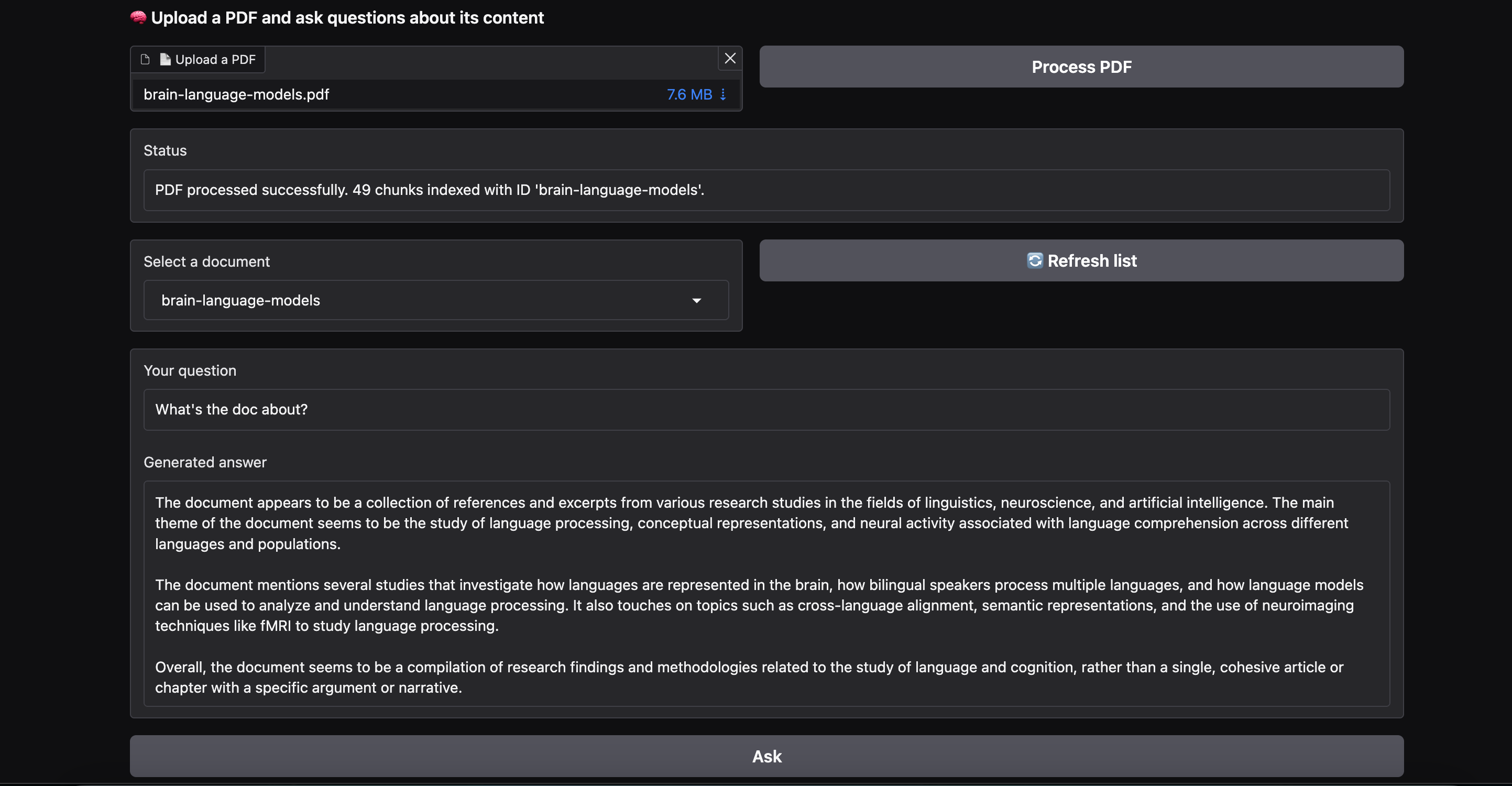

2.6 User Interface (Gradio)

A web UI was built using Gradio, supporting:

- PDF Uploads: Text extraction via

PyMuPDFand processing viaspaCy. - Real-time Feedback: Progress bars for indexing and interactive Q&A.

- Error Handling: Graceful management of subprocess errors and package dependencies.

3. Challenges & Strategies

Challenges Encountered

- Resource Constraints: Balancing model performance with Google Colab's hardware limits; solved by using remote inference for LLaMA 3.3.

- Data Cleaning: Addressing formatting issues in PDFs to ensure reliable chunking.

- Model Evaluation: Comparing MiniLM vs. USE for specific integration needs.

Implemented Strategies

- Query Improvement: Using LLM-driven rewriting to minimize ambiguity.

- Hallucination Mitigation: If the retrieved context is insufficient, the model is instructed to state that the information is unavailable.

- Consistency: Using the identical embedding model for both indexing and live queries.

4. Results and Testing

Embedding & Chunking Tests

While all-MiniLM-L6-v2 showed a slightly higher average similarity ($0.4407$ vs. $0.4232$), USE was selected due to its seamless integration with TensorFlow Hub and superior batch processing reliability.

LLM Performance

Testing confirmed that the system:

- Generates coherent and professional summaries.

- Correctly identifies "out-of-context" questions (e.g., asking about New York tourism when the database contains scientific papers).

5. Live Demo

The system is deployed and accessible at the following link: RAG System Demo on Hugging Face